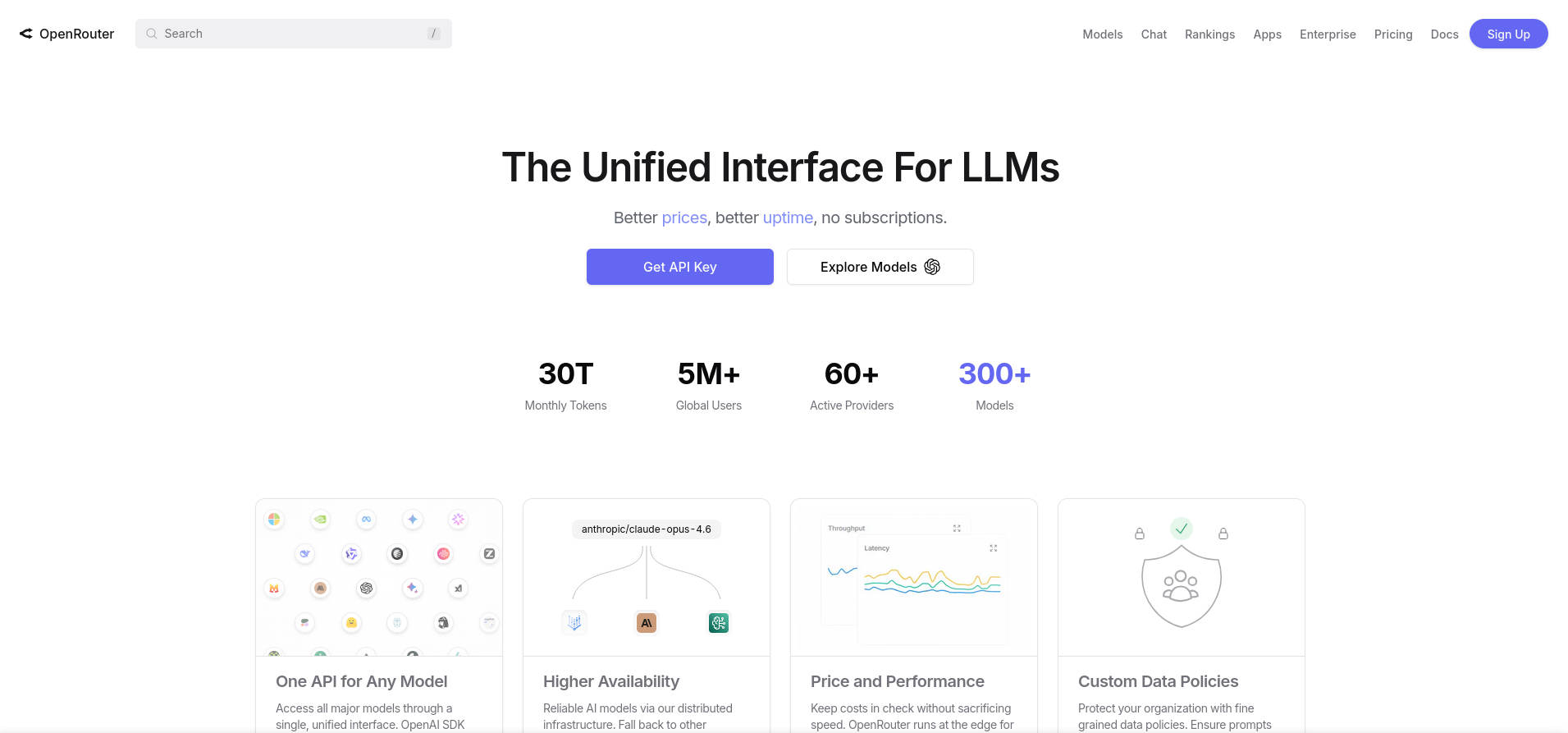

- OpenRouter consolidates access to hundreds of AI models from multiple providers using a single, unified API and centralized billing.

- The platform offers intelligent routing, failover mechanisms, and multimodal capabilities for seamless integration of text, image, and document processing.

- OpenRouter optimizes performance and cost-efficiency, allowing startups, enterprises, and researchers to create and scale innovative AI-powered applications effortlessly.

The world of artificial intelligence is evolving at a breathtaking speed, and developers today are faced with a dizzying array of large language models (LLMs) coming from various companies and providers. Choosing the best AI tool for your project often means juggling countless APIs, authentication systems, and pricing models. This complexity raises a key question: how can teams efficiently access and manage this expanding ecosystem of AI tools?

OpenRouter emerges as a powerful solution, offering a streamlined path to hundreds of AI models through a single, unified interface. Whether you’re prototyping a chatbot, building enterprise software, or conducting research, OpenRouter unlocks the potential of the AI world by bridging the gap between developers and a growing constellation of LLMs. This guide will explore the ins and outs of OpenRouter, explaining what makes it unique, how it works under the hood, its practical use cases, and why it matters for anyone working in modern AI.

What is OpenRouter? Unifying the Expanding AI Landscape

OpenRouter is a cutting-edge platform designed to simplify the experience of integrating, deploying, and using large language models (LLMs) from a multitude of providers. Rather than being a single AI model, OpenRouter functions as a central platform, connecting users to over 400 different AI models through one endpoint and a straightforward API.

Imagine having a single universal remote that controls every gadget in your living room—TV, sound system, lights, and more—instead of switching remotes for each device. That’s the essence of what OpenRouter brings to AI development. One account, one API, a galaxy of possibilities.

Why Was OpenRouter Created?

The rapid transformation of the AI field has created both an opportunity and a challenge. Over the past couple of years, we’ve moved from a manageable range of AI model providers (OpenAI, Google, Anthropic) to a vibrant, fragmented marketplace with hundreds of options. For developers, this ‘Cambrian explosion’ means more choice but also more complexity: each provider has its own API, billing, and integration headaches.

OpenRouter’s core mission is to unify this scattered ecosystem into a single, coherent experience. No need to maintain dozens of separate accounts, endpoints, or track pricing nuances. With OpenRouter, developers enjoy:

- Centralized access to hundreds of LLMs from diverse providers, all through one endpoint.

- Simplified onboarding and authentication—sign up once and access the whole platform.

- Unified billing and usage tracking for clear, consolidated oversight of costs.

- Comparison and intelligent routing to choose the optimal AI model based on budget, speed, or specific needs.

- Robust support for reliability through automatic fallback chains and failover systems.

The Architecture: How Does OpenRouter Actually Work?

This is where OpenRouter’s magic comes into play—it introduces a sophisticated routing layer between your application and dozens of AI providers. Let’s break down the process step by step:

1. The Client Layer

Your application interacts with OpenRouter by making a single API call to its endpoint. You simply specify the prompt, your model of choice (or allow OpenRouter to decide), and other parameters (like temperature or token limits). The request format is intentionally similar to familiar APIs like OpenAI’s, ensuring little to no learning curve.
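As a rough illustration of the request described above, here is a minimal sketch of the JSON body a client might build. The field names (`model`, `messages`, `temperature`, `max_tokens`) follow the OpenAI-style chat format that the article says OpenRouter resembles; the helper `build_chat_request` and the default values are made up for this example.

```python
import json


def build_chat_request(prompt, model="openai/gpt-4o", temperature=0.7, max_tokens=256):
    """Return an OpenAI-style chat request body (assumed field names)."""
    return {
        "model": model,  # which model should answer
        "messages": [{"role": "user", "content": prompt}],
        "temperature": temperature,  # sampling randomness
        "max_tokens": max_tokens,  # upper bound on the reply length
    }


body = build_chat_request("Summarize the plot of Hamlet in two sentences.")
print(json.dumps(body, indent=2))
```

Only the body is built here; nothing is sent over the network.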

2. The Routing Layer

This is the heart of OpenRouter’s innovation. Upon receiving your request, the platform quickly analyzes:

- Your instructions (do you want a specific model or the cheapest option?)

- Current model pricing

- Performance and latency metrics across providers

- Uptime and real-time availability

- Historical performance data for each task type

OpenRouter consults this live data and routes your request to the best available option, optimizing for your chosen strategy. All this happens in milliseconds, with minimal overhead.
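To make the idea of strategy-based routing concrete, here is a toy sketch. It is not OpenRouter's actual algorithm, which the article does not specify: the `Candidate` type, the `pick_candidate` function, and all prices and latencies are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    price_per_1k_tokens: float  # USD, made-up figure
    latency_ms: float  # recent average latency, made-up figure
    available: bool  # current uptime status


def pick_candidate(candidates, strategy="cheapest"):
    """Pick one available candidate according to a simple strategy."""
    live = [c for c in candidates if c.available]
    if not live:
        raise RuntimeError("no provider is currently available")
    if strategy == "cheapest":
        return min(live, key=lambda c: c.price_per_1k_tokens)
    if strategy == "fastest":
        return min(live, key=lambda c: c.latency_ms)
    raise ValueError(f"unknown strategy: {strategy}")


candidates = [
    Candidate("provider-a/model-x", 0.50, 900.0, True),
    Candidate("provider-b/model-y", 0.20, 1500.0, True),
    Candidate("provider-c/model-z", 0.10, 300.0, False),  # down, so skipped
]
print(pick_candidate(candidates, "cheapest").name)
print(pick_candidate(candidates, "fastest").name)
```

A real router would also weigh historical quality per task type, as the list above mentions; this sketch only covers price, latency, and availability.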

3. The Provider Layer

Once routed, the request is sent off to the specific LLM provider (OpenAI, Anthropic, Mistral, and many others). The provider processes the request as it would from any API consumer, then sends the response back to OpenRouter’s system.

4. The Response Layer

OpenRouter receives, normalizes, and delivers the response to your application in a standardized JSON format. If the selected provider fails or is slow, the platform can smoothly switch to a fallback model, keeping your applications reliable and available even under disruptions.
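Because every response arrives in one standardized JSON shape, client code can read replies the same way regardless of which provider served them. The sketch below assumes an OpenAI-style layout (`choices`, `message`, `usage`); the sample payload and the `summarize_response` helper are hypothetical.

```python
def summarize_response(response):
    """Pull the commonly used fields out of a chat-style response dict."""
    first_choice = response["choices"][0]
    return {
        "model": response.get("model"),  # model that actually answered
        "text": first_choice["message"]["content"],
        "total_tokens": response.get("usage", {}).get("total_tokens"),
    }


# Hand-written sample; real responses may carry additional fields.
sample_response = {
    "id": "gen-123",
    "model": "openai/gpt-4o",
    "choices": [
        {"message": {"role": "assistant", "content": "Hello!"}, "finish_reason": "stop"}
    ],
    "usage": {"prompt_tokens": 5, "completion_tokens": 2, "total_tokens": 7},
}
print(summarize_response(sample_response))
```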

Making Your First Steps with OpenRouter: Onboarding and Basic Usage

Getting started with OpenRouter only requires a few steps:

- Sign up using your email.

- Generate an API key via the dashboard under the ‘Keys’ section.

- Store your API key securely for use in development or production.

- Begin sending POST requests to the OpenRouter endpoint, just as you would when calling a model such as GPT-4o through OpenAI’s API, except that you can now tap into hundreds of models and let OpenRouter decide the best fit for each job.

The API design keeps things familiar: the request format closely mirrors OpenAI’s chat API, so migrating existing code or starting new development takes little effort.
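The steps above could look roughly like this in Python, using only the standard library. Treat the specifics as assumptions, since the article does not state them: the endpoint URL `https://openrouter.ai/api/v1/chat/completions`, the bearer-token header, and the `OPENROUTER_API_KEY` environment variable name should all be checked against the official documentation.

```python
import json
import os
import urllib.request

# Assumed endpoint; confirm against the official documentation.
API_URL = "https://openrouter.ai/api/v1/chat/completions"


def make_request(prompt, model, api_key):
    """Build (but do not send) an authenticated POST request."""
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    return urllib.request.Request(API_URL, data=body, headers=headers, method="POST")


def send(request, timeout=30):
    """Send the request and decode the JSON reply (performs a network call)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.load(resp)


# Usage, with the key read from the environment instead of being hard-coded:
#   key = os.environ["OPENROUTER_API_KEY"]
#   reply = send(make_request("Hello!", "openai/gpt-4o", key))
```

Keeping the key in an environment variable is one common way to follow the "store your API key securely" step.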

Practical Features That Set OpenRouter Apart

A single API would be helpful by itself, but OpenRouter takes things to the next level with advanced features designed for real-world usage:

Auto-Routing

No need to manually test each model or hunt for the fastest or cheapest provider: just let OpenRouter’s auto-router mode decide based on your chosen priorities (cost, speed, reliability). When you pass a special model identifier such as openrouter/auto in place of a specific model name, the system selects and routes to the most suitable LLM option.
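In practice, opting into auto-routing can be as small as changing one field of the request. The sketch below assumes an OpenAI-style request body; the helper `build_body` is hypothetical, and only the `openrouter/auto` identifier comes from the text above.

```python
AUTO_ROUTER_MODEL = "openrouter/auto"  # identifier mentioned in the article


def build_body(prompt, model=None):
    """Use the caller's model if one is given, otherwise defer to the auto-router."""
    return {
        "model": model or AUTO_ROUTER_MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }


print(build_body("Translate 'good morning' to French.")["model"])
print(build_body("Translate 'good morning' to French.", "openai/gpt-4o")["model"])
```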

Streaming Output

When building chatbots or tools that require instant feedback, OpenRouter can stream responses token by token, delivering real-time interaction that’s vital for engaging user experiences.
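Streaming APIs of this kind commonly deliver chunks as server-sent events. Assuming OpenAI-style chunks (lines prefixed with `data:`, a `delta` object per chunk, and a final `[DONE]` marker), a client could reassemble the text as sketched below. The sample lines are hand-written, not captured from a real response.

```python
import json


def iter_stream_text(lines):
    """Yield text fragments from server-sent-event lines as they arrive."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and SSE comment lines
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream marker
        chunk = json.loads(data)
        text = chunk["choices"][0].get("delta", {}).get("content")
        if text:
            yield text


sample_lines = [
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    "",
    ": keep-alive comment",
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]
print("".join(iter_stream_text(sample_lines)))  # prints "Hello"
```

In a chatbot, each yielded fragment would be appended to the UI immediately instead of being joined at the end.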

Fallback Chains

Developers can specify a list of preferred models. If the primary model fails or is overloaded, OpenRouter automatically retries with the next best option, maximizing uptime and performance without manual intervention.
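OpenRouter performs this retry on the server side. To show the behavior itself, here is a client-side sketch of the same idea. The function `complete_with_fallback`, the `fake_send` stand-in, and the model names are all hypothetical; no real API is called.

```python
def complete_with_fallback(prompt, models, send):
    """Try each model in priority order and return the first success."""
    errors = []
    for model in models:
        try:
            return model, send(model, prompt)
        except Exception as exc:  # any failure moves on to the next model
            errors.append((model, repr(exc)))
    raise RuntimeError(f"all models failed: {errors}")


def fake_send(model, prompt):
    """Stand-in for a real API call: the primary model is 'down'."""
    if model == "primary/model":
        raise ConnectionError("provider overloaded")
    return f"{model} answered: {prompt}"


used, reply = complete_with_fallback("ping", ["primary/model", "backup/model"], fake_send)
print(used, "->", reply)
```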

Error Handling

Reliable applications demand strong error management—OpenRouter standardizes error responses and provides robust mechanisms for retrying, timeouts, and detailed issue reporting.
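On the client side, a standardized error format pairs naturally with a retry policy. The following is a generic exponential-backoff sketch, not an OpenRouter API: `TransientError` is a hypothetical exception standing in for whatever retryable errors (timeouts, rate limits) your HTTP layer raises.

```python
import time


class TransientError(Exception):
    """Hypothetical marker for errors worth retrying (timeouts, rate limits)."""


def call_with_retries(func, max_attempts=3, base_delay=0.5, sleep=time.sleep):
    """Call func(), retrying on TransientError with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            sleep(base_delay * 2 ** (attempt - 1))  # 0.5 s, 1 s, 2 s, ...
```

Passing `sleep` as a parameter keeps the function easy to test without real delays.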

Support for Multimodal AI: Beyond Just Text

One of OpenRouter’s most compelling features is its growing support for multimodal models. This means you’re not just limited to text anymore. The same API can handle tasks like:

- Image analysis and captioning—submit an image URL or base64-encoded file and get insights back.

- PDF processing and text extraction—send documents and receive structured outputs.

- Image generation—use providers like DALL-E and Stable Diffusion to create images from text prompts.

- OCR (Optical Character Recognition)—transcribe content from images or scanned documents.

This opens the door to building advanced, multimodal AI applications that can understand and create content across various formats—beyond just language.
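For image input, a request message typically mixes text and image parts. The sketch below assumes the OpenAI-style content format (`type: "text"` and `type: "image_url"` parts, with base64 data passed as a data URL); whether a given model accepts it depends on that model. The helper `image_message` is made up for this example.

```python
import base64


def image_message(question, image_url=None, image_bytes=None, mime="image/png"):
    """Build a user message containing a question plus one image."""
    if image_bytes is not None:
        # Inline the file as a base64 data URL instead of a remote link.
        encoded = base64.b64encode(image_bytes).decode("ascii")
        image_url = f"data:{mime};base64,{encoded}"
    if image_url is None:
        raise ValueError("provide image_url or image_bytes")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": question},
            {"type": "image_url", "image_url": {"url": image_url}},
        ],
    }


msg = image_message("What is in this picture?", image_url="https://example.com/cat.png")
print(msg["content"][1]["image_url"]["url"])
```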

Integration, Developer Experience, and API Insights

OpenRouter delivers a developer-centric experience by prioritizing simplicity, support, and compatibility. Here’s what stands out:

- SDKs in multiple languages make it easy for Python, JavaScript, and other communities to get started without reinventing the wheel.

- Comprehensive, clear documentation is available for all endpoints and features, lowering the barrier to entry.

- Easy migration paths ensure minimal effort for projects already using other AI APIs.

This developer focus is further enhanced by integration guides for popular platforms and compatibility with other AI infrastructure services, such as Cloudflare AI Gateway. For example, changing an endpoint for Cloudflare users involves a simple swap in the URL path, meaning existing integrations are quick to update.

Strategic Advantages for Businesses, Researchers, and Content Creators

Why does all this matter in the real world?

- Startups and SaaS builders can prototype AI-powered features using the full array of models, iterating rapidly, and exploring different providers without rewriting code for each change.

- Enterprises benefit from unified billing, centralized usage caps, and seamless access controls—all of which are essential for coordinating large, diverse product teams.

- Researchers can compare outputs across multiple LLMs for benchmarks, experiments, or publishing, all from one interface.

- Developers working on complex workflows (like chatbots, virtual assistants, or multi-step agents) can orchestrate these workflows across different models and modalities—text, vision, and document analysis.

- Open-source projects and libraries can rely on OpenRouter for plug-and-play access to new AI tools, giving their communities up-to-date capabilities out of the box.

Core Features & Benefits of OpenRouter

- Unified Access to LLMs: Quickly connect to hundreds of AI models using a single account and a single API key.

- Real-Time Routing Logic: Benefit from routing based on real-time availability, pricing, and latency, ensuring requests always go to the best available model.

- Scalability and High Availability: OpenRouter is built to scale effortlessly and can handle traffic spikes with minimal overhead (only ~25 ms routing time), providing failover across 50+ cloud providers globally.

- Customization and Flexibility: You can specify preferred providers, control cost/latency tradeoffs, implement fallback orders, and tailor workflows for different applications.

- Comprehensive API and SDK Integration: Leverage tools and APIs that fit naturally into your tech stack, with robust support for popular programming languages.

- Ethical AI Usage and Security: OpenRouter is committed to responsible AI deployment, incorporating measures to prevent misuse and safeguard user data.

Comparing OpenRouter to the Alternatives

OpenRouter may seem similar to other unified access solutions like LiteLLM, but its scope and focus are distinct.

- OpenRouter’s main strength is the breadth and diversity of model support (over 400 models, and growing), combined with advanced routing intelligence and robust multi-provider fallback systems.

- LiteLLM and similar tools typically emphasize lightweight, on-premise use with a narrower model catalog, which may be better for local deployments or scenarios where ultra-low latency trumps variety.

This means that your choice should reflect whether you value flexibility, choice, and simplified API access (OpenRouter) or need highly controlled local deployment (LiteLLM).

Typical Use Cases Across Industries

- Business Operations: Automate customer support, extract insights from documents, and improve decision-making with AI-powered tools—without building from scratch for each provider.

- Academic Research: Access powerful LLMs for papers, experiments or teaching, comparing output quality rapidly to find the best model for your needs.

- Multimedia and Content Automation: Generate high-quality text, images, and even process PDFs with a few API calls, revolutionizing everything from marketing to publishing workflows.

- Seamless Integration with Existing Platforms: OpenRouter’s compatibility and integrations ensure businesses can slot in advanced AI without major infrastructure changes.

How Credits and Billing Work

OpenRouter simplifies what is often the biggest headache: tracking and managing costs across multiple AI APIs. All activity is billed using a unified credit system—each call consumes credits based on model, usage, and provider. The platform typically charges model-specific rates plus a small platform fee (~5%), and provides clear dashboards to help teams control usage and budgeting.
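A back-of-the-envelope estimate shows how model rates and the platform fee combine. The per-token rates below are invented, and applying the ~5% fee as a simple multiplier on usage is an assumption made for illustration; the actual fee mechanics may differ.

```python
def estimate_cost(prompt_tokens, completion_tokens,
                  input_rate_per_million, output_rate_per_million,
                  platform_fee=0.05):
    """Estimate the USD cost of one workload, including the platform fee."""
    model_cost = (
        prompt_tokens / 1_000_000 * input_rate_per_million
        + completion_tokens / 1_000_000 * output_rate_per_million
    )
    return model_cost * (1 + platform_fee)


# 200k input tokens at $2.50/M plus 50k output tokens at $10.00/M (made-up rates)
cost = estimate_cost(200_000, 50_000, 2.50, 10.00)
print(f"${cost:.2f}")  # prints "$1.05"
```

Here the model cost is $0.50 + $0.50 = $1.00, and the 5% fee brings the total to $1.05.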

There is a free tier for learning or experimenting, allowing users to test the waters before incurring substantial costs. This flexibility is valuable for both solo developers and enterprises with strict procurement processes.

Safety, Security, and Ethical Focus

With the rapid advance and deployment of conversational and multimodal AI, safeguarding data and promoting ethical usage have become universal concerns. OpenRouter addresses these with:

- Robust data protection mechanisms, making sure user prompts and results are handled securely.

- Transparent routing and logging so activity can be audited and traced as needed.

- Community and corporate oversight to foster responsible, beneficial use of AI technologies in all applications.

Integration with Ecosystem Partners and Third-Party Platforms

OpenRouter isn’t just a standalone service—it fits neatly into wider AI and cloud ecosystems. For example, when working with Cloudflare’s AI Gateway, you simply substitute your endpoint for a specific path and connect using your OpenRouter credentials, ensuring smooth compatibility with cloud-based AI orchestration.

Frequently Asked Questions: Clearing Up the Details

What distinguishes OpenRouter from providers like OpenAI?

OpenAI offers its own models (like GPT-4o), while OpenRouter is a unified gateway to hundreds of models, including but not limited to OpenAI’s catalog.

How does OpenRouter handle credits and payments?

Usage is measured via a credit system, consolidating billing across all models and endpoints so developers avoid tracking costs for each individual provider.

What are the main benefits to teams using OpenRouter?

- Centralized access to models, streamlined billing, and robust API controls

- Automatic routing to optimize cost, speed, and uptime

- Support for multimodal input—text, images, PDFs, and more

- Reliable fallback mechanisms for maximum application resilience

Is OpenRouter free to use?

Sign-up and initial experimentation are free, but production-scale access involves model-specific costs and a modest platform fee. Budget-conscious users can test on the free tier before committing.

What routing strategies are available?

- Manual Routing: Specify a particular model for each request.

- Auto-Routing: Let OpenRouter automatically select based on your objectives—performance, cost, or speed.

- Fallback Routing: Automatically move down a prioritized list if the primary model is unavailable.

OpenRouter stands as a transformative force in the way developers, businesses, and researchers interact with AI models. By offering unified access to hundreds of LLMs, integrated multimodal support, intelligent routing, and enterprise-ready security, it not only simplifies the AI development process but opens the door for greater creativity and faster innovation. For anyone determined to build at the cutting edge of AI, OpenRouter is quickly becoming an essential tool in the toolkit.